wavlm large

by microsoft

Open source · 473k downloads · 104 likes

About

WavLM Large is a speech processing model developed by Microsoft for audio sampled at 16 kHz. Pre-trained on 94,000 hours of English audio, it performs well on tasks such as speech recognition, audio classification, and speaker verification once it has been fine-tuned for them. It builds on the HuBERT framework and is designed to capture both phonetic content and speaker characteristics, which makes it particularly strong on benchmarks such as SUPERB. Because it was pre-trained in a self-supervised way and ships without a tokenizer, it needs supervised fine-tuning before it can be used in practical applications. The model is versatile across complex speech processing tasks, but it should be expected to perform well only on English.

Documentation

WavLM-Large

The large model pretrained on 16kHz sampled speech audio. When using the model, make sure that your speech input is also sampled at 16kHz.
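As a minimal sketch of the sampling-rate check, the snippet below reads the rate stored in a WAV file header with Python's standard `wave` module and reports whether the file would need resampling. The helper names `read_sample_rate` and `needs_resampling` are hypothetical and not part of the model's tooling, and the sketch assumes uncompressed PCM WAV input.

```python
import wave

EXPECTED_RATE_HZ = 16_000  # sampling rate the model was pre-trained on


def read_sample_rate(path: str) -> int:
    """Return the sampling rate (Hz) stored in a PCM WAV file header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def needs_resampling(path: str, expected_hz: int = EXPECTED_RATE_HZ) -> bool:
    """Return True if the file's sampling rate differs from the expected rate."""
    return read_sample_rate(path) != expected_hz
```

If `needs_resampling` returns `True`, the audio should be resampled to 16 kHz with an audio library of your choice before it is passed to the model.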

Note: This model does not have a tokenizer as it was pretrained on audio alone. In order to use this model for speech recognition, a tokenizer should be created and the model should be fine-tuned on labeled text data. Check out this blog for a more detailed explanation of how to fine-tune the model.
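One common way to create a tokenizer for this kind of fine-tuning is to start from a character-level vocabulary built from the training transcripts. The sketch below illustrates that idea only. The function name `build_char_vocab`, the `|` word delimiter, and the `[UNK]` and `[PAD]` tokens are assumptions made for illustration, not something this model card prescribes.

```python
def build_char_vocab(transcripts):
    """Map every character seen in the transcripts to an integer id.

    Assumes the transcripts do not already contain the "|" character,
    which is used here as a visible word delimiter in place of the space.
    """
    chars = sorted(set("".join(transcripts)))
    vocab = {ch: idx for idx, ch in enumerate(chars)}

    # Replace the space with a visible word delimiter, keeping its id.
    if " " in vocab:
        vocab["|"] = vocab.pop(" ")

    # Special tokens for unknown characters and for padding.
    vocab["[UNK]"] = len(vocab)
    vocab["[PAD]"] = len(vocab)
    return vocab


if __name__ == "__main__":
    print(build_char_vocab(["hello world", "hi"]))
```

The resulting dictionary could then be saved as JSON and used as the vocabulary file for a tokenizer during fine-tuning.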

The model was pre-trained on:

- 60,000 hours of Libri-Light

- 10,000 hours of GigaSpeech

- 24,000 hours of VoxPopuli

Paper: WavLM: Large-Scale Self-Supervised Pre-Training for Full Stack Speech Processing

Authors: Sanyuan Chen, Chengyi Wang, Zhengyang Chen, Yu Wu, Shujie Liu, Zhuo Chen, Jinyu Li, Naoyuki Kanda, Takuya Yoshioka, Xiong Xiao, Jian Wu, Long Zhou, Shuo Ren, Yanmin Qian, Yao Qian, Jian Wu, Michael Zeng, Furu Wei

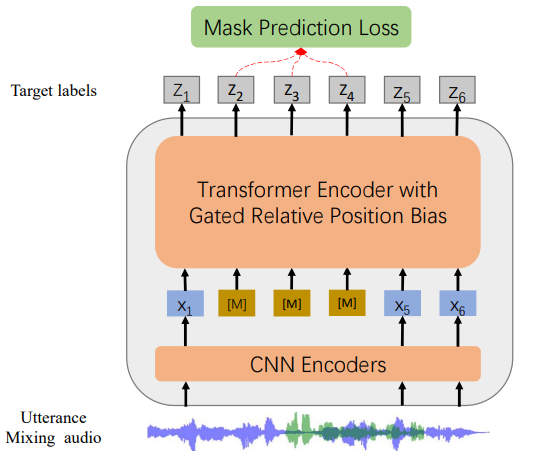

Abstract Self-supervised learning (SSL) achieves great success in speech recognition, while limited exploration has been attempted for other speech processing tasks. As speech signal contains multi-faceted information including speaker identity, paralinguistics, spoken content, etc., learning universal representations for all speech tasks is challenging. In this paper, we propose a new pre-trained model, WavLM, to solve full-stack downstream speech tasks. WavLM is built based on the HuBERT framework, with an emphasis on both spoken content modeling and speaker identity preservation. We first equip the Transformer structure with gated relative position bias to improve its capability on recognition tasks. For better speaker discrimination, we propose an utterance mixing training strategy, where additional overlapped utterances are created unsupervisely and incorporated during model training. Lastly, we scale up the training dataset from 60k hours to 94k hours. WavLM Large achieves state-of-the-art performance on the SUPERB benchmark, and brings significant improvements for various speech processing tasks on their representative benchmarks.

The original model can be found under https://github.com/microsoft/unilm/tree/master/wavlm.

Usage

This is an English pre-trained speech model that has to be fine-tuned on a downstream task like speech recognition or audio classification before it can be used in inference. The model was pre-trained in English and should therefore perform well only in English. The model has been shown to work well on the SUPERB benchmark.

Note: The model was pre-trained on phonemes rather than characters. This means that one should make sure that the input text is converted to a sequence of phonemes before fine-tuning.

Speech Recognition

To fine-tune the model for speech recognition, see the official speech recognition example.

Speech Classification

To fine-tune the model for speech classification, see the official audio classification example.

Speaker Verification

TODO

Speaker Diarization

TODO

Contribution

The model was contributed by cywang and patrickvonplaten.

License

The official license can be found here

Links & Resources