UAE Large V1

by WhereIsAI


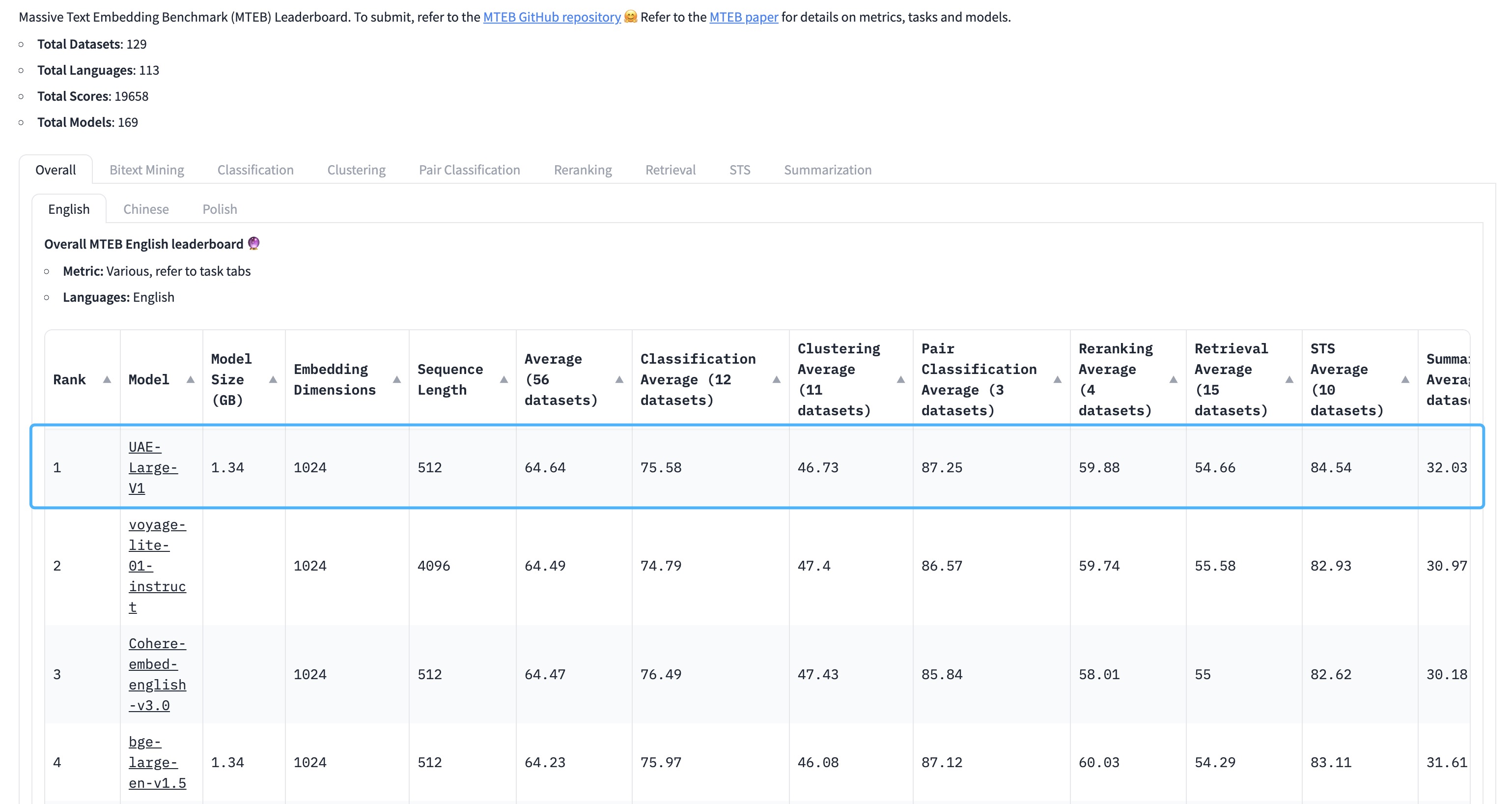

UAE Large V1 is a universal text embedding model that maps English sentences to dense vector representations. It targets tasks that depend on fine-grained semantic distinctions, such as text similarity and information retrieval. It is trained with AnglE, an angle-based optimization objective designed to improve discrimination between embeddings. At release (December 2023) it achieved state-of-the-art results on the MTEB leaderboard with an average score of 64.64. Typical uses include similarity analysis, text classification, and integration into semantic search systems. Because it is general-purpose rather than domain-specialized, it suits both academic and industrial work.
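Similarity between embeddings is usually measured with cosine similarity, which is the cosine of the angle between two vectors. The NumPy sketch below illustrates that relationship. It is not AnglE's training objective, and the toy 3-dimensional vectors are hypothetical stand-ins for real model outputs.

```python
import numpy as np


def cosine_similarity(u: np.ndarray, v: np.ndarray) -> float:
    """Cosine of the angle between u and v."""
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))


def angle_degrees(u: np.ndarray, v: np.ndarray) -> float:
    """Angle between u and v in degrees, recovered from the cosine."""
    # Clipping guards against rounding pushing the cosine slightly outside [-1, 1].
    cos = np.clip(cosine_similarity(u, v), -1.0, 1.0)
    return float(np.degrees(np.arccos(cos)))


# Hypothetical toy vectors standing in for sentence embeddings.
a = np.array([1.0, 0.0, 0.0])
b = np.array([1.0, 1.0, 0.0])
c = np.array([0.0, 0.0, 2.0])

print(round(cosine_similarity(a, b), 4), round(angle_degrees(a, b), 1))
print(round(cosine_similarity(a, c), 4), round(angle_degrees(a, c), 1))
```

Higher cosine similarity corresponds to a smaller angle, so "more similar" and "closer in angle" describe the same ordering.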

Documentation

Universal AnglE Embedding

📢 WhereIsAI/UAE-Large-V1 is licensed under the MIT License. Feel free to use it in any scenario.

If you use it in academic papers, please cite us 👉 see the Citation section below.

🤝 Follow us on:

- GitHub: https://github.com/SeanLee97/AnglE

- Preprint Paper: AnglE-optimized Text Embeddings

- Conference Paper: AoE: Angle-optimized Embeddings for Semantic Textual Similarity (ACL 2024)

- 📘 Documentation: https://angle.readthedocs.io/en/latest/index.html

You are welcome to use AnglE to train powerful sentence embedding models and run inference with them.

🏆 Achievements

- 📅 May 16, 2024 | AnglE's paper was accepted to the ACL 2024 Main Conference

- 📅 Dec 4, 2023 | 🔥 Our universal English sentence embedding model WhereIsAI/UAE-Large-V1 achieves SOTA on the MTEB Leaderboard with an average score of 64.64!

🧑🤝🧑 Siblings:

- WhereIsAI/UAE-Code-Large-V1: This model can be used for code or GitHub issue similarity measurement.

Usage

1. angle_emb

Bash

python -m pip install -U angle-emb

- Non-Retrieval Tasks

There is no need to specify any prompts.

Python

from angle_emb import AnglE
from angle_emb.utils import cosine_similarity

angle = AnglE.from_pretrained('WhereIsAI/UAE-Large-V1', pooling_strategy='cls').cuda()
doc_vecs = angle.encode([
    'The weather is great!',
    'The weather is very good!',
    'i am going to bed'
], normalize_embedding=True)

for i, dv1 in enumerate(doc_vecs):
    for dv2 in doc_vecs[i+1:]:
        print(cosine_similarity(dv1, dv2))
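Assuming `normalize_embedding=True` returns L2-normalized rows and `doc_vecs` is a 2-D NumPy array of shape `(n_texts, dim)`, the nested loop above can be replaced by one matrix product, because the dot product of unit vectors equals their cosine similarity. In this minimal sketch, `l2_normalize` and `pairwise_cosine` are hypothetical helper names, and random vectors stand in for model output so it runs without a GPU.

```python
import numpy as np


def l2_normalize(x: np.ndarray) -> np.ndarray:
    """Scale each row of x to unit L2 norm."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)


def pairwise_cosine(vecs: np.ndarray) -> np.ndarray:
    """Return the (n, n) cosine-similarity matrix for n row vectors."""
    unit = l2_normalize(vecs)
    # For unit vectors, the dot product is exactly the cosine similarity.
    return unit @ unit.T


# Stand-in for doc_vecs: 3 random 8-dimensional "embeddings".
rng = np.random.default_rng(0)
doc_vecs = rng.normal(size=(3, 8))

sims = pairwise_cosine(doc_vecs)
# Visit each unordered pair once, like the nested loop above.
for i, j in zip(*np.triu_indices(len(doc_vecs), k=1)):
    print(i, j, round(float(sims[i, j]), 4))
```

Normalizing inside `pairwise_cosine` costs little and keeps the result correct even if the input was not normalized.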

- Retrieval Tasks

For retrieval, apply the Prompts.C prompt to queries only, not to documents.

Python

from angle_emb import AnglE, Prompts
from angle_emb.utils import cosine_similarity

angle = AnglE.from_pretrained('WhereIsAI/UAE-Large-V1', pooling_strategy='cls').cuda()
qv = angle.encode(Prompts.C.format(text='what is the weather?'))
doc_vecs = angle.encode([
    'The weather is great!',
    'it is rainy today.',
    'i am going to bed'
])

for dv in doc_vecs:
    print(cosine_similarity(qv[0], dv))
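With many documents, the per-document loop above is typically replaced by scoring every document at once and keeping the best matches. This minimal NumPy sketch of top-k retrieval uses a hypothetical helper `top_k`. The toy vectors are hypothetical stand-ins for `qv[0]` and `doc_vecs` from the example above.

```python
import numpy as np


def top_k(query_vec: np.ndarray, doc_vecs: np.ndarray, k: int = 2):
    """Return (index, score) pairs for the k documents most similar to the query."""
    q = query_vec / np.linalg.norm(query_vec)
    d = doc_vecs / np.linalg.norm(doc_vecs, axis=1, keepdims=True)
    scores = d @ q                   # cosine similarity of each document to the query
    order = np.argsort(-scores)[:k]  # indices sorted by descending score
    return [(int(i), float(scores[i])) for i in order]


docs = ['The weather is great!', 'it is rainy today.', 'i am going to bed']
# Hypothetical stand-ins for the query embedding and the three document embeddings.
query = np.array([1.0, 1.0, 0.0])
doc_vecs = np.array([
    [1.0, 0.9, 0.0],
    [0.6, 1.0, 0.3],
    [0.0, 0.1, 1.0],
])

for idx, score in top_k(query, doc_vecs, k=2):
    print(f"{score:.4f}  {docs[idx]}")
```

For very large collections, a dedicated vector index would usually replace the full `argsort`, but the scoring logic is the same.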

2. sentence-transformers

Python

from angle_emb import Prompts
from scipy import spatial
from sentence_transformers import SentenceTransformer

model = SentenceTransformer("WhereIsAI/UAE-Large-V1").cuda()
qv = model.encode(Prompts.C.format(text='what is the weather?'))
doc_vecs = model.encode([
    'The weather is great!',
    'it is rainy today.',
    'i am going to bed'
])

for dv in doc_vecs:
    # scipy's cosine() returns a distance, so 1 - distance is the cosine similarity
    print(1 - spatial.distance.cosine(qv, dv))
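The sentence-transformers example imports `Prompts` from `angle_emb` only to format the query. To avoid that dependency, for example when sending raw text to a server, you can apply the template yourself. This sketch assumes the template string is `'Represent this sentence for searching relevant passages: {text}'`. Verify it with `print(Prompts.C)` in your installed `angle_emb` version before relying on it. `QUERY_PROMPT` and `build_inputs` are hypothetical names.

```python
# Assumed to equal angle_emb's Prompts.C; check with `print(Prompts.C)`.
QUERY_PROMPT = 'Represent this sentence for searching relevant passages: {text}'


def build_inputs(queries, documents):
    """Prefix queries with the retrieval prompt; leave documents unchanged."""
    prompted_queries = [QUERY_PROMPT.format(text=q) for q in queries]
    return prompted_queries, list(documents)


queries, docs = build_inputs(
    ['what is the weather?'],
    ['The weather is great!', 'it is rainy today.', 'i am going to bed'],
)
print(queries[0])
print(docs)
```

Keeping the prompt logic in one function makes it harder to accidentally prefix documents, which the retrieval instructions above say not to do.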

3. Infinity

Infinity is an MIT-licensed server for deploying embedding models behind an OpenAI-compatible API.

Bash

docker run --gpus all -v $PWD/data:/app/.cache -p "7997":"7997" \
  michaelf34/infinity:latest \
  v2 --model-id WhereIsAI/UAE-Large-V1 --revision "369c368f70f16a613f19f5598d4f12d9f44235d4" --dtype float16 --batch-size 32 --device cuda --engine torch --port 7997
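Once the container is running, the server can be queried over HTTP. This standard-library sketch assumes an OpenAI-style `POST /embeddings` route on port 7997. It also assumes the route accepts `{"model": ..., "input": [...]}` and returns `{"data": [{"index": ..., "embedding": [...]}, ...]}`. Check the route and schema against the Infinity documentation for your version. `build_request`, `parse_embeddings`, and `embed` are hypothetical helper names.

```python
import json
import urllib.request

BASE_URL = "http://localhost:7997"  # assumed: matches -p 7997 in the docker command
MODEL_ID = "WhereIsAI/UAE-Large-V1"


def build_request(texts, base_url=BASE_URL, model=MODEL_ID):
    """Build (but do not send) an OpenAI-style embeddings request."""
    payload = json.dumps({"model": model, "input": list(texts)}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/embeddings",  # assumed route; check your Infinity version
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_embeddings(response_json):
    """Return embeddings in input order, using each item's 'index' field."""
    items = sorted(response_json["data"], key=lambda item: item["index"])
    return [item["embedding"] for item in items]


def embed(texts):
    """Send the request to a running server and return one vector per text."""
    with urllib.request.urlopen(build_request(texts)) as resp:
        return parse_embeddings(json.load(resp))
```

With the server up, `embed(['The weather is great!', 'it is rainy today.'])` should return one vector per input. For retrieval, format query strings with the Prompts.C template before sending them. This sketch assumes the server does not add the prompt for you.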

Citation

If you use our pre-trained models, please support us by citing our work:

BibTeX

@article{li2023angle,
  title={AnglE-optimized Text Embeddings},
  author={Li, Xianming and Li, Jing},
  journal={arXiv preprint arXiv:2309.12871},
  year={2023}
}

Capabilities & Tags

sentence-transformers, onnx, safetensors, openvino, bert, feature-extraction, mteb, sentence_embedding, feature_extraction, transformers
